
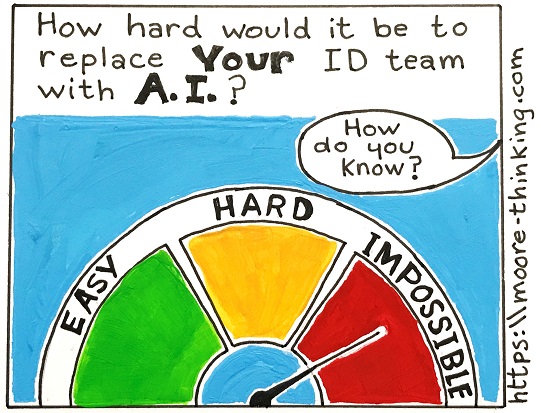

How to AI-proof your training team

Word on the street is that a tidal wave of AI-related corporate staffing cuts is imminent and, perhaps, has already started.

For the bean counters, the lure of the latest, greatest silver bullet—the possibility of replacing teams of professional, experienced writers, trainers, and IDs with a single low-paid contractor named Ralph who’s got ChatGPT all figured out—is obvious.

Wait, scratch that! Maybe Ralph isn’t even necessary. Why not just give learners access to ChatGPT (the way this elementary school claims to do) and let them teach and train themselves? Booya – problem solved! Money saved! Happiness all around! (Well, you know. The folks out of work probably aren’t super happy.)

Of course, if you work in instructional design, technical writing, teaching, training, or any related communication field, you know how silly that sounds for so many, many reasons.

And yet, if history has taught us anything, it’s that reality isn’t always apparent to decision makers.

And the truth is that, in today’s climate, the ability to demonstrate ROI in a way that ties the value of training to specific, irreplaceable activities is our best form of defense against a C-suite that makes staffing decisions based on marketing materials and social media posts.

This article describes 3 common approaches to training: one that’s easy to replace with AI, one that’s hard to replace, and one that’s irreplaceable.

It might be worth figuring out which of the three best describes your organization’s approach and then, if necessary, considering how to level up.

1. EASY to replace: Present some information and distribute a survey.

Presenting information to learners is easy! Everyone can do it, regardless of formal writing background, experience, or training. (Presenting information effectively, though, is another kettle of fish entirely. That does require professional-level writing skills, as described in #2 below.)

Surveys, which capture reactions and opinions rather than facts, can’t tell us whether the information we’ve delivered has hit the mark, and they can’t shed any light at all on whether that information has translated, or ever will translate, into changed behavior. Technically, this approach isn’t training at all; it’s awareness. Because it can be, and often is, done by just about anybody, and because there’s never any meaningful follow-up, there’s little practical downside to letting AI be that “anybody.” In other words, if this is the approach your team is taking, you may be on the radar for downsizing or replacement.

Clues your organization is taking this Kirkpatrick Level 1 approach include:

- No significant change in the field post-“training,” not even anecdotally. Learners keep making the same mistakes in the field after sitting through the presentation as they did before.

- Learners don’t understand the “big picture” and are unable to “think critically.”

- Different departments and teams throughout the organization write and rely on their own documentation. Multiple sources of documented truth in multiple states of currency make training the “right” way to perform a skill or task nearly impossible.

- The training department keeps putting out different versions of the same “training,” hoping each time that the results will be different.

- A quick survey reveals most employees either don’t know, or don’t agree on, key steps in the “trained” process.

- Supervisors regularly field the same questions over and over about how to perform steps in the “trained” process.

- Employees avoid or resist taking “training” because their experience has taught them it’s a waste of time.

- The training department regularly receives feedback ranging from subtle (rumors) to direct (layoffs) that training isn’t valued at the organizational level.

- In the absence of formal practice and assessment, learners practice on live data and customers, where the stakes are high.

If “anyone” can do it (and anyone can, indeed, share out information that’s never vetted for quality and never tied to measurable practice), why can’t AI? That’s what managers are thinking.

2. HARD to replace: Effective presentation, relevant knowledge assessments, and bulletproof documentation.

The most efficient way to enable skilled workers to “self serve” training is to:

- Present information (live lecture or video) that’s clear, concise, complete, and well-organized enough to enable learners to perform a skill or task they’re already somewhat familiar with. The purpose of this presentation piece is to introduce information, motivate learners, telegraph “gotchas,” set expectations, reinforce important points, and show learners how to self-serve (i.e., how to access and navigate reference documentation).

- Administer scenario-based assessments that measure learners’ ability to apply knowledge accurately to theoretical situations.

- Follow up with clear, concise, up-to-date, step-by-step, accurate reference documentation that learners can access at point of need.

Note that it takes top-notch professional-level skills to create effective presentations, relevant assessments, and documentation well-written (or, in the case of video, well-produced) enough to drive results. It requires human beings who know how to research processes that are often undocumented, incorrectly documented, in flux, or misrepresented (knowingly or unknowingly) by SMEs. It requires human beings with the tact and stamina to conduct interviews across politically charged departmental lines, and to perform multiple walk-throughs and review rounds of each process to be trained.

Note, too, that because skills training often includes a proprietary component, is nearly always extremely detailed, and must be consistently maintained over time, AI typically isn’t a good fit.

Indications your organization is taking this Kirkpatrick Level 2 approach:

- While they are required to think through how they would perform in a hypothetical work setting, learners first test their newly acquired knowledge in an authentic setting with live data and customers.

- The performance failures that occur are minimal, and investigation suggests they’re largely related to learner confidence level, accuracy, and speed.

- New hires exhibit rapid skills improvement, while tenured roles tend to perform at a high level overall.

- While management in general seems to value training, the training department can’t prove, in quantitative terms, how or even if their work affects the business’s bottom line.

3. IRREPLACEABLE: Add authentic practice sufficient to build and measure skills.

Adding performance assessments to #2 is the brass ring. The combination of effective presentation, bulletproof documentation, and authentic practice sufficient to build skills, measure performance, quantify improvement, and tie improvement to the bottom line is the holy grail of training and constitutes an irreplaceable, mission-critical component of business success.

Unfortunately, many organizations struggle to implement this approach for two reasons:

- There are only two ways to provide sufficient authentic practice, and both of them are expensive to implement and maintain: 1) Realistic mocked-up interactive scenarios in sufficient quantity to drive proficiency and recall (think dozens vs. one or two), and 2) A staging server or sandbox accompanied by a formal testing program.

- Getting the metrics we need to prove ROI is often a challenge. Let’s say we do have a way to provide our learners enough realistic practice to know we’re releasing them into the wild with improved skills. We still need to calculate the value of those improved skills to the business. Did our training shorten our product development lifecycle? Did it result in better customer service that translated to fewer lost customers and higher sales? More effective sales tactics that resulted in more new customers? This piece is difficult because, even if we have someone on staff smart enough to figure out what data points we need to look at to prove training moved the needle, we still need to figure out how to access that data. And pulling the right data can be a challenge, because many organizations rely on a patchwork of systems that don’t talk to each other.

AI is incapable of providing sufficient authentic practice (which often requires access to an organization’s secure software systems and proprietary processes). And for the same reasons that managers struggle, AI is also incapable of mining an organization’s systems to gather and analyze relevant data.

Signs your organization has been able to implement this approach:

- You’re able to measure and validate learner performance on new or revised processes before your learners are let loose to practice on your live data/clients. (Kirkpatrick Level 3)

- You’re able to keep training aligned with the nearly constant incremental system and process improvements that define today’s corporate landscape.

- You’re able to quantify the ROI of training on your organization’s bottom line. (Kirkpatrick Level 4)
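For readers who want to see what “quantifying ROI” can look like in practice, here is a minimal sketch in Python. It assumes the commonly used net-benefit formula (benefit minus cost, divided by cost, times 100). The function name and the dollar figures are hypothetical, chosen purely for illustration. In a real organization, the hard part is arriving at a defensible benefit number, not the arithmetic.

```python
def training_roi_percent(monetary_benefit: float, training_cost: float) -> float:
    """Return training ROI as a percentage: (benefit - cost) / cost * 100."""
    if training_cost <= 0:
        raise ValueError("training_cost must be positive")
    return (monetary_benefit - training_cost) / training_cost * 100


# Hypothetical figures for illustration only; they are not from any real program.
benefit = 150_000.0  # assumed dollar value attributed to training (e.g., retained customers)
cost = 60_000.0      # assumed total cost (development, delivery, learner time)

roi = training_roi_percent(benefit, cost)
print(f"Training ROI: {roi:.0f}%")  # prints "Training ROI: 150%"
```

A result above 0% means the estimated benefit exceeded the cost; a negative result means the training cost more than the value attributed to it.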

The bottom line (TLDR)

Historically, many corporations assumed training was valuable because it made intuitive sense. The past couple of decades have seen a lot of changes to the education and training spaces, though, culminating in a climate where some managers are seriously questioning whether third-party, unvettable algorithms can replace nuanced, high-quality training conducted by skilled, knowledgeable professionals.

Ultimately, the biggest fallout from management’s decision won’t be the pennies they (may) save; it will be the long-term effects of their decision on business-critical data, processes, products, customers, and the workforce.

Until then, it can’t hurt us—or them—to level up and prove our value in dollars and cents.

What’s YOUR take?

Do you have a different point of view? Something to add? A request for an article on a different topic? Please consider sharing your thoughts, questions, or suggestions for future blog articles in the comment box below.
